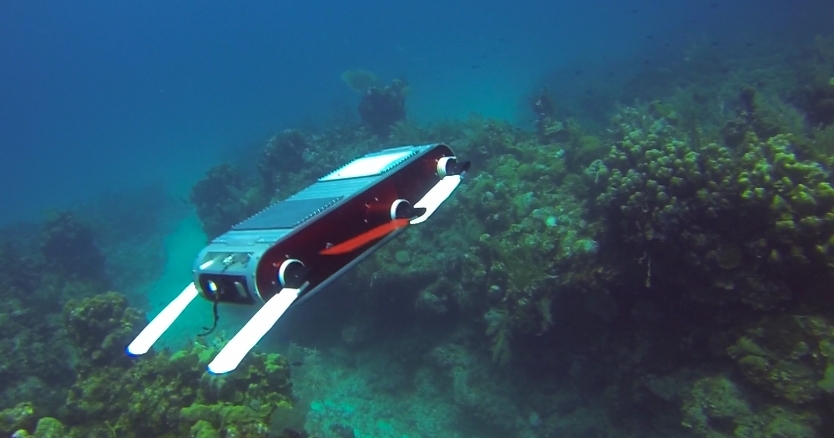

In this project we have developed a method for vision-based place recognition in environments that contain many similar-looking features and are prone to variations in illumination [1]. In particular, we have focused on underwater environments [2]. The high similarity of features makes it difficult to tell two different places apart. The method uses the Bag-of-Words (BoW) approach to derive an image descriptor from a set of relevant regions, which are extracted by a visual attention algorithm. We have named our approach Bag of Relevant Regions (BoRR). The descriptor of each relevant region is a 2D histogram of the chromatic channels (a and b) of the CIE-Lab color space.
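
To make the region descriptor concrete, the following is a minimal sketch of a 2D chromatic histogram. It is not the authors' implementation: the function name `ab_histogram`, the bin count, and the assumed channel range of [-128, 128) are illustrative choices, and the pixels are assumed to be already converted to CIE-Lab. The lightness channel is ignored, so only the a and b values contribute to the descriptor.

```python
def ab_histogram(lab_pixels, bins=8, lo=-128.0, hi=128.0):
    """Normalized 2D histogram over the chromatic (a, b) channels.

    lab_pixels: iterable of (L, a, b) tuples for one region (hypothetical input
    format). The bin count and the [lo, hi) range are assumptions for this sketch.
    """
    lab_pixels = list(lab_pixels)
    hist = [[0.0] * bins for _ in range(bins)]
    width = (hi - lo) / bins
    for _, a, b in lab_pixels:  # the lightness channel L is deliberately unused
        # Map each chromatic value to a bin index, clamping out-of-range values.
        i = min(bins - 1, max(0, int((a - lo) // width)))
        j = min(bins - 1, max(0, int((b - lo) // width)))
        hist[i][j] += 1.0
    total = float(len(lab_pixels))
    if total > 0:
        # Normalize so that regions of different sizes are comparable.
        hist = [[count / total for count in row] for row in hist]
    return hist


# Two pixels with the same chromaticity but different lightness share a bin.
region = [(50, -100, -100), (60, -100, -100), (70, 100, 100), (20, 0, 0)]
descriptor = ab_histogram(region, bins=2)
```

Flattening the returned rows into a single list gives a fixed-length vector that can be clustered or compared with any standard distance.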

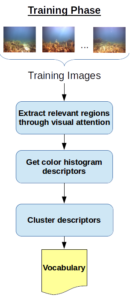

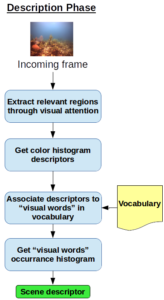

The proposed approach has two phases:

- Training phase: a visual attention algorithm is used to identify the most relevant regions in a set of images. These regions (features) are clustered by the similarity of their 2D color histograms. For each cluster, a representative feature (visual word) is taken to build a vocabulary of visual features.

- Description phase: the relevant features of a given image are obtained with the visual attention algorithm. Each feature is then associated with the corresponding visual word in the vocabulary. Finally, a histogram of the occurrences of each visual word is computed and used as the image descriptor.
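
The two phases above can be sketched as follows. This is only an illustration under stated assumptions: the page does not name the clustering algorithm or how the representative feature is chosen, so the sketch uses k-means with a deterministic farthest-point initialization and takes the cluster mean as the visual word. The function names are hypothetical, and the region descriptors are assumed to be flattened color histograms that a visual attention step (not shown) has already produced.

```python
def sq_dist(u, v):
    """Squared Euclidean distance between two descriptors."""
    return sum((x - y) ** 2 for x, y in zip(u, v))


def nearest(words, d):
    """Index of the visual word closest to descriptor d."""
    return min(range(len(words)), key=lambda k: sq_dist(words[k], d))


def build_vocabulary(descriptors, k, iters=20):
    """Training phase: cluster region descriptors into k visual words.

    Assumption: k-means with farthest-point initialization; the cluster mean
    stands in for the 'representative feature' of each cluster.
    """
    words = [list(descriptors[0])]
    while len(words) < k:
        # Next seed: the descriptor farthest from every word chosen so far.
        far = max(descriptors, key=lambda d: min(sq_dist(w, d) for w in words))
        words.append(list(far))
    for _ in range(iters):
        groups = [[] for _ in range(k)]
        for d in descriptors:
            groups[nearest(words, d)].append(d)
        for idx, group in enumerate(groups):
            if group:  # an empty cluster keeps its previous word
                words[idx] = [sum(col) / len(group) for col in zip(*group)]
    return words


def describe_image(region_descriptors, words):
    """Description phase: count how often each visual word occurs in an image."""
    counts = [0] * len(words)
    for d in region_descriptors:
        counts[nearest(words, d)] += 1
    return counts


# Toy data: two well-separated groups of 2-bin descriptors.
training = [[0.0, 0.0], [0.1, 0.0], [0.0, 0.1],
            [1.0, 1.0], [0.9, 1.0], [1.0, 0.9]]
vocabulary = build_vocabulary(training, k=2)
image_descriptor = describe_image([[0.05, 0.05], [0.95, 0.95], [1.0, 1.0]], vocabulary)
```

The resulting occurrence histogram has one entry per visual word, so images with different numbers of relevant regions still yield descriptors of the same length.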

We compared our method against Bag-of-Words with SURF descriptors and against FAB-MAP (a state-of-the-art method) on images taken in underwater environments. Our approach obtained better results in most cases.

References

[1] A. Maldonado-Ramírez and L. A. Torres-Méndez, “A Bag of Relevant Regions for Visual Place Recognition in Challenging Environments,” International Conference on Pattern Recognition, Cancún, 2016.

[2] A. Maldonado-Ramírez and L. A. Torres-Méndez, “A Bag of Relevant Regions Model for Visual Place Recognition in Coral Reefs,” 2016 MTS/IEEE OCEANS, Monterey, 2016.
